Combining multiple graphics cards to split the workload and improve performance sounds good on paper. In reality, multi-GPU builds in gaming are long gone.

Traditional SLI, NVLink, and CrossFire setups did boost FPS, but the price-to-performance ratio was hard to justify.

On top of that came microstuttering, heat management problems, and excessive power requirements. So most gamers switched back to single-GPU builds, and the technologies were eventually discontinued.

But computers aren’t just for games, are they? Users running heavy workloads, like mining, 3D rendering, complex simulations, and deep learning, can definitely benefit from multiple graphics cards!

Let’s discuss this in greater detail.

Why Multi-GPU Is No Longer Relevant in Gaming

When multi-GPU was first introduced, it meant splitting the rendering work across two or more graphics cards to achieve higher frame rates in games.

However, things didn’t turn out as planned, and multi-GPU gaming is nearly dead today.

No More SLI/NVLink/CrossFire Support

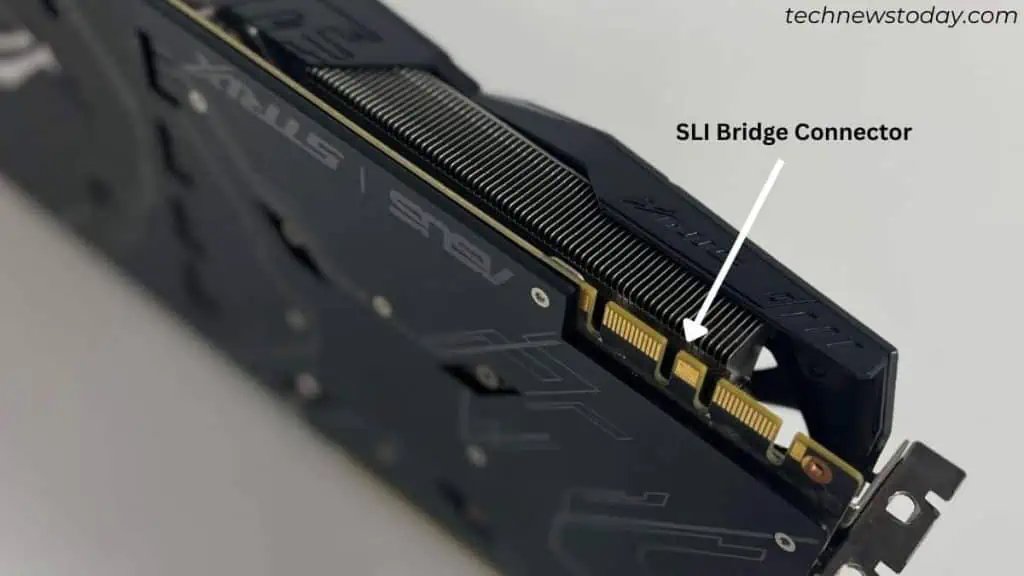

In older rigs, the motherboard had to be compatible with NVIDIA’s SLI or AMD’s CrossFire to simultaneously utilize two or more graphics cards.

These technologies have been discontinued on the latest graphics cards for good reasons, and most newer motherboards don’t support them either.

Both NVIDIA and AMD have stopped adding driver support for new games, and the latest cards no longer have the dedicated bridge connector.

A few older cards can still work together through the explicit multi-GPU support in newer graphics APIs like Vulkan and DirectX 12. However, it’s entirely up to game developers to implement mGPU support in their games.

You may find a handful of titles, like Rise of the Tomb Raider, Shadow of the Tomb Raider, and Ashes of the Singularity, that take advantage of multiple GPUs. But the vast majority of games don’t support it.

Outperformed by a Single Powerful GPU

A dual-GPU setup combines the power of two graphics cards, so in supported games you can expect a higher average FPS or play at a higher resolution.

But the performance boost is only about 20% to 30%, at double the price. And as I stated earlier, most games don’t support SLI/NVLink/CrossFire at all. In those games, only one graphics card gets used, which makes the second one pointless.
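To see why a 20% to 30% boost at double the price is a poor deal, here is a rough FPS-per-dollar comparison in Python. The card price and single-card FPS are hypothetical placeholders rather than benchmark results, and 25% is simply the midpoint of the range above; a game without multi-GPU support is modeled as 0% scaling.

```python
# Hypothetical inputs for illustration only; swap in real prices and benchmarks.
CARD_PRICE = 500.0      # USD per card (assumed)
SINGLE_CARD_FPS = 60.0  # FPS of one card in some game (assumed)


def fps_per_dollar(num_cards: int, scaling: float) -> float:
    """FPS per dollar when each extra card adds `scaling` x the single-card FPS."""
    fps = SINGLE_CARD_FPS * (1 + scaling * (num_cards - 1))
    return fps / (CARD_PRICE * num_cards)


single = fps_per_dollar(1, 0.0)
dual_supported = fps_per_dollar(2, 0.25)   # game supports SLI/CrossFire (~25% boost)
dual_unsupported = fps_per_dollar(2, 0.0)  # game ignores the second card

print(f"1 card:               {single:.3f} FPS/$")
print(f"2 cards, supported:   {dual_supported:.3f} FPS/$")
print(f"2 cards, unsupported: {dual_unsupported:.3f} FPS/$")
```

With these placeholder numbers, value drops from 0.120 FPS/$ with one card to 0.075 FPS/$ with two, and to 0.060 FPS/$ in games that only use one card.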

The clear answer is to get one better GPU instead of two cheaper cards.

In my own testing, an RTX 4090 turned out to be 50% faster than two RTX 3090s (the RTX 3090 was the only 30-series card to support SLI at launch) when playing Cyberpunk 2077 at 4K resolution.

Microstuttering Issues

So why don’t multiple GPUs scale as expected?

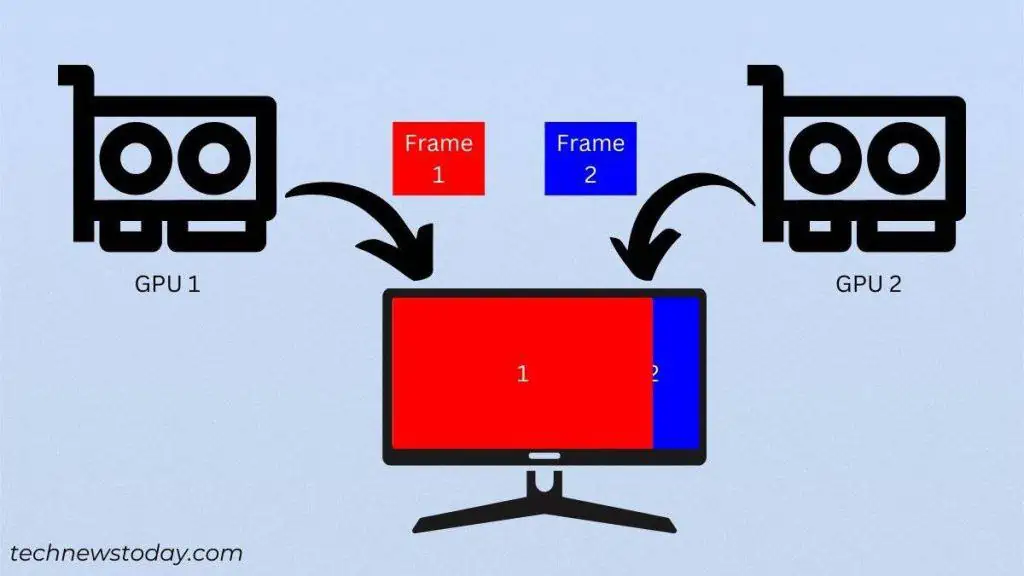

SLI and CrossFire setups typically used alternate frame rendering (AFR), meaning one GPU rendered the odd frames while the other rendered the even ones.
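A simplified model of how AFR hands out frames, as a Python sketch. The function name is hypothetical, and real drivers also queue and pace frames, which this ignores; it only shows how frames alternate between cards. Counting frames from 0, GPU 0 takes the even frames and GPU 1 the odd ones.

```python
def afr_assign(num_frames: int, num_gpus: int = 2) -> dict[int, list[int]]:
    """Return which frame numbers each GPU renders under alternate frame rendering."""
    schedule: dict[int, list[int]] = {gpu: [] for gpu in range(num_gpus)}
    for frame in range(num_frames):
        # Frames are dealt out round-robin: frame 0 -> GPU 0, frame 1 -> GPU 1, ...
        schedule[frame % num_gpus].append(frame)
    return schedule


print(afr_assign(6))  # {0: [0, 2, 4], 1: [1, 3, 5]}
```

Each GPU works through its own frames independently, so nothing in this scheme by itself guarantees the frames are evenly spaced when they reach the screen.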

For this to work smoothly, the GPUs had to stay in sync and deliver frames in the right order at even intervals. But in practice, even casual gamers noticed microstuttering (irregular delays between frames even though the FPS counter looked normal).

The thing is, the average FPS might increase in a multi-GPU build, but the 1% lows stay about the same, leading to noticeable stutter. This all came down to poor synchronization between the graphics cards.
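The gap between average FPS and 1% lows is easy to see with frame times. This Python sketch uses two made-up 1,000-frame traces: a single GPU delivering a frame every 20 ms, and a dual-GPU AFR setup whose frames arrive at uneven 10 ms / 20 ms gaps. The 1% low here is the FPS equivalent of the slowest 1% of frames, which is one common definition; benchmarking tools differ on the exact method.

```python
def average_fps(frame_times_ms: list[float]) -> float:
    """Average FPS = frames rendered / total seconds."""
    return 1000.0 * len(frame_times_ms) / sum(frame_times_ms)


def one_percent_low_fps(frame_times_ms: list[float]) -> float:
    """FPS equivalent of the slowest 1% of frames (one common definition)."""
    slowest = sorted(frame_times_ms, reverse=True)
    worst = slowest[: max(1, len(slowest) // 100)]
    return 1000.0 * len(worst) / sum(worst)


# Hypothetical frame-time traces (milliseconds per frame), 1,000 frames each.
single_gpu = [20.0] * 1000     # evenly paced frames
dual_afr = [10.0, 20.0] * 500  # more frames overall, but unevenly paced

for name, trace in (("single GPU", single_gpu), ("dual GPU (AFR)", dual_afr)):
    print(f"{name}: avg {average_fps(trace):.1f} FPS, "
          f"1% low {one_percent_low_fps(trace):.1f} FPS")
```

In this toy example, the average climbs from 50.0 to 66.7 FPS while the 1% low stays at 50.0 FPS, and the uneven 10 ms / 20 ms rhythm is what players perceive as microstuttering.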

VRAM Mirrored Rather Than Added Up

With multiple GPUs, the amount of usable VRAM stays the same and doesn’t add up.

For example, if I use two 6 GB graphics cards, the capacity won’t increase to 12 GB. Each GPU keeps its own copy of the same data in its frame buffer, so you effectively still have 6 GB.

So, if you were planning to play modern games at 4K (assuming the bare minimum VRAM requirement is 12 GB), 6 GB of usable VRAM wouldn’t be sufficient.

In such a scenario, a single powerful GPU with enough VRAM would be the best bet.
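The VRAM arithmetic in a short Python sketch, assuming mirrored memory as in SLI/CrossFire: every card holds the same data, so the smallest card sets the limit and capacities are never summed. The 12 GB requirement is the assumption from the 4K example above, not a measured figure.

```python
REQUIRED_VRAM_GB = 12  # assumed 4K requirement from the example above


def usable_vram_gb(cards_gb: list[int]) -> int:
    """Mirrored VRAM: each card stores a full copy, so the smallest card is the limit."""
    return min(cards_gb)


for setup in ([6, 6], [12]):
    usable = usable_vram_gb(setup)
    verdict = "enough" if usable >= REQUIRED_VRAM_GB else "not enough"
    print(f"{setup} -> {usable} GB usable ({verdict})")
```

This prints `[6, 6] -> 6 GB usable (not enough)` followed by `[12] -> 12 GB usable (enough)`.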

Use Cases Where Multi-GPU Is Beneficial

SLI isn’t the only way to make use of multiple GPUs. You can certainly go for two or more graphics cards for distributed workloads without combining them as one.

I’m referring to users who are heavily involved in graphically intensive tasks.

For example, you can run an MMORPG for hours at maxed settings on one GPU and use the second video card for mining or 3D rendering, without overloading a single GPU.

Here are the fields where multi-GPU is beneficial:

- AI and Deep Learning: Perform simultaneous computations and distribute the workload among multiple cards.

- Complex simulations: Process more data in a shorter time than a single GPU system (for example, CFD simulation software).

- Mining: Offers high computational power but also requires a compatible motherboard and CPU.

- 3D Creation: Improves overall rendering performance and is suitable for modeling, motion tracking, rigging, animation, video editing, and game creation (examples: Blender with CUDA/OptiX/HIP and DaVinci Resolve).

- GPU Passthrough: Use one GPU for the host OS and others to run multiple virtual machines.

- Heavy Stock Trading: Usually not required, but many enthusiasts like to run up to six monitors, which can be more than a single card’s display outputs support.

Installing Multiple Graphics Cards? Consider These Factors

For distributed workloads, you can even mix different graphics cards (which isn’t possible when rendering video games with SLI or CrossFire). Since this doesn’t rely on SLI or CrossFire, the cards don’t need to be the same model.
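As a rough illustration of spreading work across mismatched cards, here is a Python sketch of a simple greedy scheduler: longest job first, with each job going to whichever GPU would finish it soonest. This is a generic heuristic, not how any particular application assigns work, and the GPU names, relative speeds, and job sizes are all hypothetical. In practice, you usually pick devices in the application itself, such as Blender’s Cycles render device preferences, or with the `CUDA_VISIBLE_DEVICES` environment variable for CUDA programs.

```python
def schedule_jobs(jobs: dict[str, float],
                  gpu_speeds: dict[str, float]) -> dict[str, list[str]]:
    """Greedy longest-job-first assignment of independent jobs to GPUs.

    jobs: job name -> amount of work (arbitrary units)
    gpu_speeds: GPU name -> work units per hour (higher is faster)
    """
    busy_until = {gpu: 0.0 for gpu in gpu_speeds}  # hours of work queued per GPU
    plan: dict[str, list[str]] = {gpu: [] for gpu in gpu_speeds}
    for job, work in sorted(jobs.items(), key=lambda item: item[1], reverse=True):
        # Pick the GPU that would complete this job earliest given its current queue.
        best = min(gpu_speeds, key=lambda g: busy_until[g] + work / gpu_speeds[g])
        busy_until[best] += work / gpu_speeds[best]
        plan[best].append(job)
    return plan


# Hypothetical setup: a newer card twice as fast as an older one.
gpus = {"new_gpu": 2.0, "old_gpu": 1.0}
jobs = {"render_scene": 4.0, "train_model": 2.0, "simulate_cfd": 2.0}
print(schedule_jobs(jobs, gpus))
```

With these numbers, the faster card takes `render_scene` and `simulate_cfd` (3 hours of queued work) and the slower card takes `train_model` (2 hours), so neither card sits idle while the other grinds through everything.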

However, there are plenty of factors you should check before installing multiple graphics cards:

- More graphics cards mean higher power requirements. So, you’ll have to upgrade to a beefy PSU that can supply enough power to all the installed components.

- Make sure your motherboard has enough PCIe x16 slots and the CPU supports sufficient PCIe lanes.

- For three or more graphics cards, it’s best to use PCIe risers so the cards can be mounted with enough spacing.

- You’ll also need a specialized PC case with enough space for all the GPUs and proper airflow.

- Heat management becomes difficult, and the graphics cards can end up overheating often. To prevent this, install enough case fans and maintain neutral pressure inside the chassis for balanced airflow.

- Finally, make sure each video card has enough VRAM for its own workload. Note that their memory won’t be combined!
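The power and PCIe checks in the list boil down to simple budgets. This Python sketch is a back-of-the-envelope calculation, not a sizing tool: the 25% PSU headroom, the 100 W allowance for other components, and every wattage and lane count in the example are hypothetical placeholders. It also ignores lanes provided by the chipset and the way a given motherboard splits lanes between its slots, so check your CPU and motherboard specifications for real figures.

```python
import math

PSU_HEADROOM = 1.25  # assumed ~25% safety margin over estimated peak draw


def recommended_psu_watts(gpu_watts: list[int], cpu_watts: int,
                          other_watts: int = 100) -> int:
    """Estimated peak draw plus headroom, rounded up to the next 50 W."""
    total = (sum(gpu_watts) + cpu_watts + other_watts) * PSU_HEADROOM
    return math.ceil(total / 50) * 50


def enough_pcie_lanes(cpu_lanes: int, lanes_per_gpu: list[int],
                      other_lanes: int = 4) -> bool:
    """True if the CPU lanes cover every GPU slot plus other devices (e.g. an NVMe SSD)."""
    return sum(lanes_per_gpu) + other_lanes <= cpu_lanes


# Hypothetical two-GPU workstation.
print(recommended_psu_watts([300, 300], cpu_watts=150))  # 1100
print(enough_pcie_lanes(20, [8, 8]))    # True: two cards at x8 each
print(enough_pcie_lanes(20, [16, 16]))  # False: two full x16 links need more lanes
```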

Final Verdict

So, unless you’re into heavy workloads with extensive GPU usage, there’s no need for a multi-GPU setup.

For instance, if you’re only gaming and streaming, one powerful GPU is more than enough. A dual-monitor setup would be a better investment than two separate graphics cards for individual purposes.

And if you don’t want to put more stress on your graphics card, you can use your CPU’s integrated graphics (if it has one) for lighter tasks. Just make sure graphics-intensive apps, like games, run on the dedicated GPU.